Install additional language models, for example Japanesepython -m spacy download ja_core_news_sm

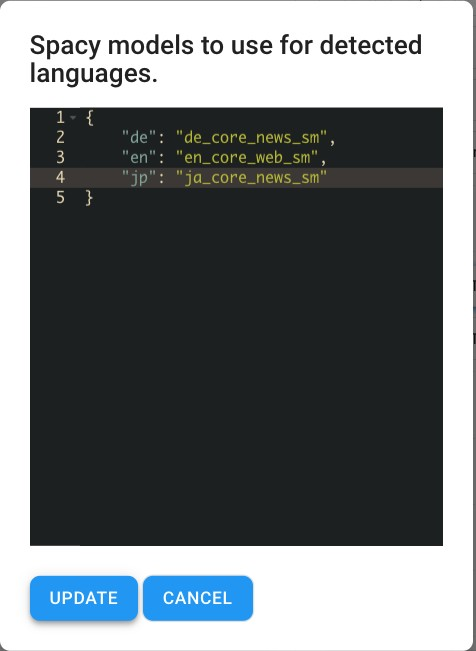

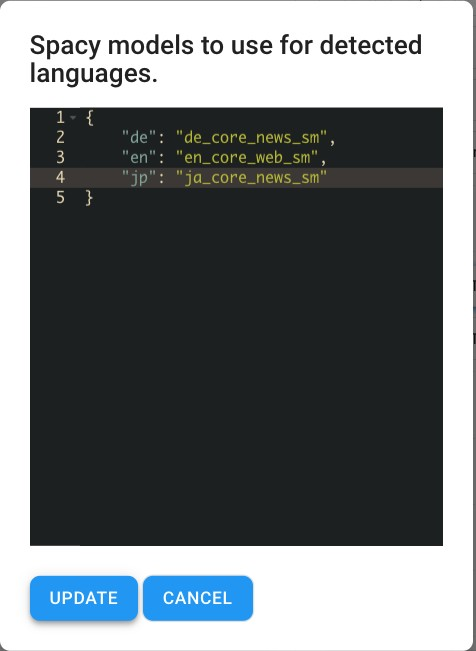

language_models : Update SpaCy language model mapping (the language code is expected to be found in facet language, see Language Detection ) .

The built-in “Nlp Keyphrase Tagger” pipes items through a configurable SpaCy Pipeline to perform Key-Phrase Extraction and additionally Named Entity Recognition as well as Rule-Based Sentiment Analysis.

The Pipelet is configurable within the pipeline Editor.

To reduce processing time of big PDFs, consider only a subset of pages.

process_pages :

dynamic : Chosen relative to document size (default)

Take at least 10 pages, but at most √total_pages

all : Take all pages.

int : Take first N pages.

Per default english (en_core_web_sm) and german (de_core_news_sm) models are installed on Squirro instances.

Install additional language models, for example Japanesepython -m spacy download ja_core_news_sm

language_models : Update SpaCy language model mapping (the language code is expected to be found in facet language, see Language Detection ) .

Extract highest ranked key-phrases based on the TextRank algorithm.

Key phrases are selected and ranked from a pool of recognised Noun Chunks and recognised Named Entities per item.

tag_phrases: Enable / Disable key-phrase tagging

tag_top_k_phrases: Amount of phrases to tag

tag_phrases: Enable / Disable key-phrase tagging

tag_top_k_phrases: Amount of phrases to tag

dynamic : Total amount of phrases selected relative to document size (between 20 - 70)

10 : Take N highest ranked phrases as specified

tag_topics: Enable simple topic-tagging based on key-phrases

Key phrases are stored within the facet:nlp_tag__phrases.

The item’s Title is also added.

Content based auto-completion (type-ahead)

Significant-terms aggregation on search results

With configuration tag_topics:True, the pool of ranked key-phrases is used to extract cleaned, deduplicated phrases referred to as “topics” (stored in facet:nlp_tag__topics).

Concept

- Filter steps:

- Remove terms with POS ["ADJ", "DET", "PUNCT"]

- Remove terms containing (almost) only number characters, like `33120x`

- De-Duplicate:

- Skip phrases that are also detected in NER-TAGS ["PRODUCT", "EVENT", "PERSON"] (configurable)

- Skip phrases that contain terms from already stored "topics"

- Select 20 phrases evenly across all ranks (as determined via TextRank) |

(Optional)

Store recognised entities within their corresponding facet, like .

tag_entities : Enable entity (NER) tagging.

collect_entities : Specify NER tags to be added. (Check support on installed Label Scheme).

tag_entities_per_type : Amount of entities (per type) to be added to their corresponding facet.

One facet per entity, like Location = [Europe, London]

Applies rule based sentiment analysis (vaderSentiment) that is specifically attuned to sentiments expressed in social media or domains like NY Times editorials, movie reviews, and product reviews.

It doesn’t require any training data but is constructed from a generalizable, valence-based, human-curated gold standard sentiment lexicon.

tag_sentiment : Enable rule-based sentiment tagging (for english language only)

Overall Sentiment Label facet:sentiment_pretrained

One sentiment label (neutral, positive, negative) per document.

Sentiment analysis is applied per sentence

Sentences with neutral sentiment are skipped

Overall Sentiment Scorefacet:nlp_tag__sentiment_score

Float value within [-1,+1]

Sentiment Assessment facet:positive_terms, facet:negative_terms

A sentiment phrase consists of the valence-term and it’s context. \

Input

"The tech provides insight into unstructured email content, it allows me to truly understand the conversation between the business and our customers. The insight gained from this analysis is significantly deeper than cam be achieved from structured data analysis” *Copied from Gartner

Output

{

'sentiment_pretrained': ['positive'],

'positive_terms': ['truly understand', 'insight gained'],

'negative_terms': [],

'nlp_tag__phrases': ['structured data analysis', 'unstructured email content' ]

} |

→ That review showcases the combined insights gained through sentiment-assessment and key-phrase extraction.

Input

“This was not a good experience”

Output

{

'sentiment_pretrained': ['negative'],

'positive_terms': [],

'negative_terms': ['not a good experience']

} |